AI is rapidly evolving from the early simple tools to increasingly complex agents able to perform reasoning and decision making. As these agents are used for more tasks, the ability to use multiple co-operating agents to perform tasks will become more important, e.g. for tasks that require specialised knowledge or access across domains. This will be possible with custom solutions using dedicated agents or MCP server agents, but large-scale, easy and flexible solutions harnessing the full potential of agents will require a common means for agents to communicate capabilities to each other using a trusted means of communication and collaboration.

Booking a trip is often given as an example of the potential use of agents, because in spite of the fact that travel systems are able to communicate, the user still has to co-ordinate their hotel/flight/train/bus/activities. This will be replaced by specialized agents for each mode of transport and accommodation, but will also require custom agent integrations and interfaces in the absence of a standard protocol for agent collaboration.

The rapidly growing importance and relevance of agents to data systems can be seen in the research paper from the University of California, Berkeley: “Supporting Our AI Overlords: Redesigning Data Systems to be Agent-First”. This paper proposes new research on data systems to support the demands that Large Language Model (LLM) agents will make to manipulate and analyze data. They argue that data systems need to adapt to more native support for agentic workloads to handle the envisaged scale, heterogeneity, redundancy and steerability.

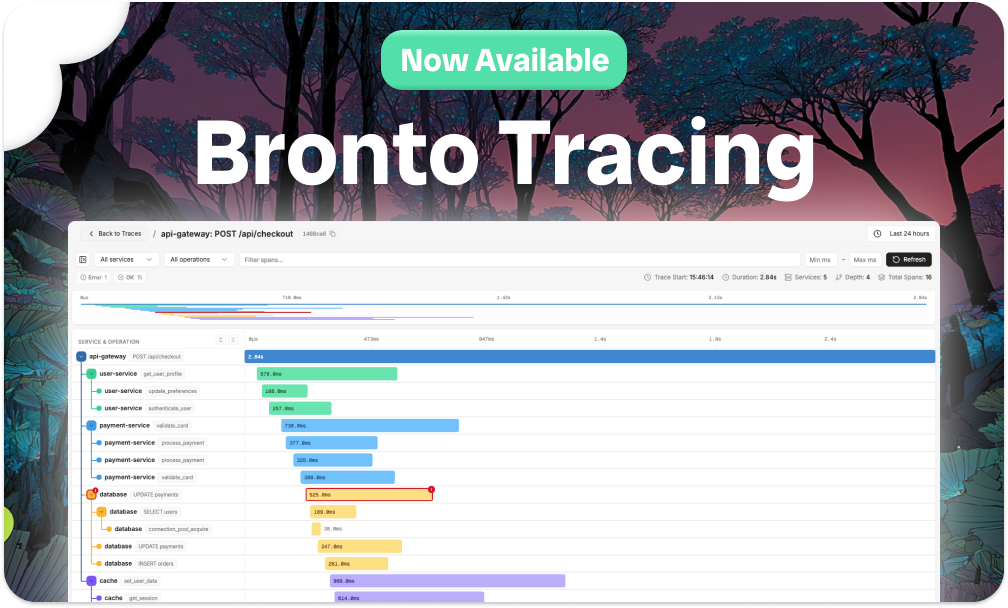

In terms of agent search, the Berkeley paper identifies the need for high throughput and the need to handle heterogeneous systems spanning coarse-grained data and metadata exploration, and partial and complete solution formulation. This aligns well with using the high-performance, specialised Bronto platform for rapid search of logs as part of a set of collaborating agents.

The term agentic AI has been used to describe many scenarios using agents – I like the definition from IBM that “Agentic AI is an artificial intelligence system that can accomplish a specific goal with limited supervision”, and:

“In a multiagent system, each agent performs a specific subtask required to reach the goal and their efforts are coordinated through AI orchestration.” Specifically, they say: “The term ‘agentic’ refers to these models’ agency, or, their capacity to act independently and purposefully.”

The Agent2Agent (A2A) protocol aims to provide the means for agents to collaborate, even when those agents are built by different companies and on different frameworks, as is envisaged in agentic AI. Originally developed by Google, A2A has now been brought under the Linux Foundation as an open source project.

This series of blogs on the Agent2Agent (A2A) protocol comes in three parts:

- This first blog will introduce some key concepts about A2A and agentic search and also show how to build a sample A2A “Hello World” agent.

- The second blog will show how MCP can be used in a custom search scenario and show how easy it is to include the Bronto logging platform in this agent scenario with a sample MCP server sitting in front of the Bronto REST API.

- The third blog will extend the sample “Hello World” A2A agent from this blog into a richer “agentic” search scenario that orchestrates two agents, and will show how an A2A agent can use an MCP server to access tools, making the two protocols work together.

Overview of A2A

A2A gives agents an open protocol for interaction, built on HTTP, which standardizes how agents exchange messages, requests and data. A2A allows agents to:

- Discover each other's capabilities, described at a high level, without exposing their internal state, memory or implementation

- Negotiate interaction details (such as the type of data – text, forms, media)

- Collaborate on running tasks in a secure manner

A2A is well documented at https://a2a-protocol.org/latest/ and is based on the following key concepts, which will be shown by the helloworld.py sample later in this blog:

- Agent Card: This is the public metadata that describes an agent's capabilities, skills, URL and authentication requirements. It allows other agents or clients to request this card to discover what the agent can do.

- A2A Agent: A2A has two types of agent:

  - A2A Server: this exposes an HTTP API endpoint implementing A2A methods and executes tasks on behalf of other agents

  - A2A Client: an application or agent that sends requests to an A2A server, using its URL, to initiate tasks or conversations

- Task: A client sends an A2A message (with its role set to user in the message) to an agent to initiate a task. Each task has a unique ID and a set of states (e.g. submitted, working, input-required, completed).

- Message: a message contains:

  - role: "user" or "agent"

  - metadata: optional application data

  - parts: an array containing the content. An A2A message consists of one or more parts. Parts can be TextPart (type: "text"), FilePart (type: "file") (image or binary data) or structured data, e.g. a JSON object

- Defined communication flows, using a defined JSON format:

  - Discovery: The client agent discovers another agent by retrieving its Agent Card, usually by a GET to the URL /.well-known/agent-card.json or /agent/authenticatedExtendedCard

  - Initiation: The client sends a request with a task id to the server agent to initiate a task

  - Processing: The server agent receives and processes the task request. It may stream intermediate updates (using Server-Sent Events), or it may process the task to completion and then send a response.
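To make the concepts above more concrete, here is a minimal Python sketch of an Agent Card and a user message as plain dictionaries. The role, metadata and parts fields follow the descriptions in the list above; the example URL, the skill id, the messageId field and the helper names make_text_message and text_of are assumptions for illustration only. The authoritative schema is the one in the A2A specification, which may name some fields differently depending on the protocol version.

```python
import uuid

# Hypothetical Agent Card: public metadata describing what the agent can do.
AGENT_CARD = {
    "name": "Hello World Agent",
    "description": "Replies with a greeting",
    "url": "http://localhost:9999/",  # assumed address of the A2A server
    "skills": [
        {
            "id": "hello_world",  # illustrative skill id
            "name": "Say hello",
            "description": "Returns 'hello world'",
        }
    ],
}


def make_text_message(text, role="user", metadata=None):
    """Build a message with a role, optional metadata and a single text part."""
    if role not in ("user", "agent"):
        raise ValueError("role must be 'user' or 'agent'")
    message = {
        "role": role,
        "parts": [{"type": "text", "text": text}],
        "messageId": uuid.uuid4().hex,  # assumed identifier field
    }
    if metadata:
        message["metadata"] = metadata
    return message


def text_of(message):
    """Concatenate the text of every text part in a message."""
    return "".join(
        part["text"] for part in message["parts"] if part.get("type") == "text"
    )
```

A client would send something like make_text_message("hi") to start a task, and the server would reply with a message whose role is "agent".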

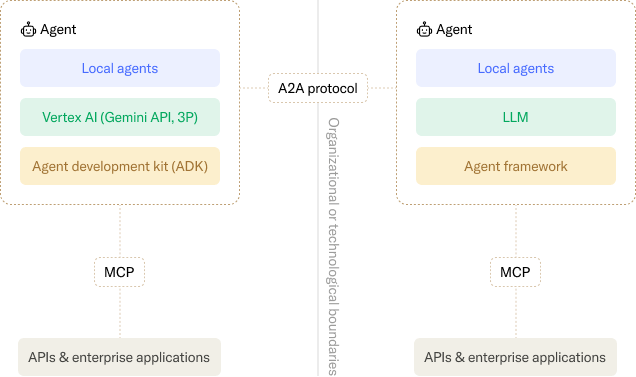
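The task lifecycle can be sketched as a small in-memory state machine. This is a simplified illustration rather than the protocol's implementation: it uses only the four states named earlier (the specification defines more), and the transition table, the Task class and the process function are assumptions made for this example. The generator stands in for a server that streams intermediate updates before completing.

```python
import uuid
from dataclasses import dataclass, field

# Allowed transitions in this simplified sketch (an assumption, not the spec).
TRANSITIONS = {
    "submitted": {"working"},
    "working": {"input-required", "completed"},
    "input-required": {"working"},
    "completed": set(),
}


@dataclass
class Task:
    """A task with a unique ID, a current state and the states it has left."""
    id: str = field(default_factory=lambda: uuid.uuid4().hex)
    state: str = "submitted"
    history: list = field(default_factory=list)

    def advance(self, new_state):
        if new_state not in TRANSITIONS[self.state]:
            raise ValueError(f"cannot move from {self.state} to {new_state}")
        self.history.append(self.state)
        self.state = new_state


def process(task, needs_input=False):
    """Toy server-side processing that yields each new state as an update."""
    task.advance("working")
    yield task.state
    if needs_input:
        task.advance("input-required")
        yield task.state
        task.advance("working")  # the client has supplied the missing input
        yield task.state
    task.advance("completed")
    yield task.state
```

Iterating over process(Task()) gives the sequence of updates a client would observe; a completed task rejects any further transition.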

A2A and MCP

The third blog in this series will show an emerging pattern where A2A agents sit in front of MCP servers. In this use case, the Agent Card describes how the agent exposes the tools (or skills) of an MCP server. An agent can use MCP to discover and interact with MCP server tools, while using A2A to communicate with other agents.

MCP and A2A can be compared as below:

- MCP exposes tools and structured data sources to an agent (or LLM). It extends an agent’s capabilities by providing a protocol for agents to communicate with the MCP server, which in turn can access databases or the underlying functionality of a product via its APIs, etc.

- A2A provides structured agent-to-agent communication allowing multiple autonomous agents to collaborate, delegate tasks, and exchange information using their capabilities exposed via their agent card.

Essentially MCP focuses on standardizing how a single agent accesses tools, while A2A standardizes how multiple agents communicate and collaborate.

- MCP is used in a given context for a particular agent and lacks built-in agent authentication, whereas A2A enables multi-agent interactions by providing secure communication and a way for an agent to publish its capabilities and to discover the capabilities of other agents.

- MCP Tools are generally basic operations with defined inputs/outputs and predictable behavior, e.g. exposing selected API functionality, whereas A2A is designed for autonomous agents able to reason, use tools and collaborate with other agents to perform tasks.

MCP and A2A are not mutually exclusive, as AI applications can use both, i.e. agents using specific tools via MCP to perform specific actions and agents collaborating with each other via A2A for more complex problems.
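The division of labour between the two protocols can be sketched in plain Python. Nothing here uses a real MCP or A2A library: the TOOLS registry stands in for the tools an MCP server might expose, and search_logs, count_rows, build_agent_card and handle_request are hypothetical names chosen for illustration. The point is only that the tools stay behind the agent, while the Agent Card advertises them to other agents as skills.

```python
# Hypothetical stand-ins for tools that an MCP server would expose.
def search_logs(query):
    return f"log lines matching {query!r}"


def count_rows(table):
    return f"row count for {table}"


TOOLS = {
    "search_logs": {"description": "Search log data", "fn": search_logs},
    "count_rows": {"description": "Count rows in a SQL table", "fn": count_rows},
}


def build_agent_card(name, url, tools):
    """Advertise each tool as a skill on the agent's card."""
    return {
        "name": name,
        "url": url,
        "skills": [
            {"id": tool_id, "description": spec["description"]}
            for tool_id, spec in tools.items()
        ],
    }


def handle_request(skill_id, argument, tools):
    """Route a request from another agent to the matching tool."""
    if skill_id not in tools:
        raise KeyError(f"unknown skill: {skill_id}")
    return tools[skill_id]["fn"](argument)
```

Another agent would read the card returned by build_agent_card to learn which skills exist, then ask for one by id; it never needs to know how the tool behind the skill is implemented.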

Building an A2A Agent

We will create two simple Python agents for demonstration purposes (not production ready) – an A2A server and an A2A client. These two agents will communicate using the A2A protocol. You could also look at a good step-by-step guide - note the section in that guide “Using the A2A Client Library (Optional)” on using the helper classes in the official A2A GitHub repository and in particular the

- A2AClient class that can handle fetching the agent card and sending tasks

- A2ACardResolver to discover the agent's card

Note that you could also ask an LLM to create an A2A agent. How well that works depends on the quality of your prompt, but I would recommend including an instruction to use the official A2A GitHub repository at https://github.com/a2aproject/A2A.

Installation and Setup

These examples use code from the official A2A GitHub samples repository. They require the following:

- Python 3.12 or higher.

- UV (Python package manager) – this is optional, but recommended by the A2A project for managing dependencies and running the samples. If you use UV, you can let it handle dependencies automatically, e.g. you can run uv sync (to install dependencies) from the repository's samples/python directory.

- UV will first look for your package (in pyproject.toml), then execute it as a module using Python's -m flag, which looks for and executes __main__.py inside that package. The __main__.py file is a special file that Python recognizes as the entry point when a package is run as a module.

- UV is a modern package manager, but you can use pip and venv instead to install dependencies manually, as long as you make sure to install every library the samples need.
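The role of __main__.py is plain Python behavior and can be observed without UV. The sketch below uses only the standard library: it writes a throwaway __main__.py into a temporary directory and runs that directory with runpy.run_path, which executes the __main__.py it finds there. The run_directory helper and the GREETING variable are hypothetical names used for illustration; this is not how UV itself is implemented.

```python
import runpy
import tempfile
from pathlib import Path


def run_directory(source):
    """Write `source` to __main__.py in a temporary directory and run it."""
    with tempfile.TemporaryDirectory() as tmp:
        Path(tmp, "__main__.py").write_text(source)
        # Given a directory, run_path executes the __main__.py inside it
        # and returns the globals that the file defined.
        return runpy.run_path(tmp)


result = run_directory("GREETING = 'hello world'\n")
print(result["GREETING"])
```

Running a directory from the command line with the Python interpreter relies on the same convention.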

Running the Sample A2A Agents

Clone the A2A samples repository to download the project to your machine and run the “helloworld” server as below:

> git clone https://github.com/a2aproject/a2a-samples.git

> cd a2a-samples/samples/python/agents/helloworld

> pip install uv

> uv run .

This runs the __main__.py in the local helloworld directory, which includes the definition of an AgentCard, AgentSkill and an extended AgentCard and skills. It calls uvicorn to run a server listening on localhost and port 9999. Requests from agents will be handled by the Agent code in agent_executor.py. The agent card and skills for this helloworld agent are explained clearly here and the agent_executor code is explained here.
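With the server running, the discovery step can also be tried by hand. The following is a minimal sketch using only the standard library, not the official client: it assumes the server is reachable at http://localhost:9999 and serves its card at the well-known path mentioned earlier. The function names agent_card_url, http_get and fetch_agent_card are hypothetical, and the get parameter exists only so the HTTP call can be swapped out when no server is available.

```python
import json
from urllib.parse import urljoin
from urllib.request import urlopen

WELL_KNOWN_PATH = ".well-known/agent-card.json"


def agent_card_url(base_url):
    """Return the well-known Agent Card URL for an agent's base URL."""
    if not base_url.endswith("/"):
        base_url += "/"
    return urljoin(base_url, WELL_KNOWN_PATH)


def http_get(url):
    """Fetch a URL and return the response body as text."""
    with urlopen(url, timeout=5) as response:
        return response.read().decode("utf-8")


def fetch_agent_card(base_url, get=http_get):
    """Retrieve and parse the Agent Card published by an A2A server."""
    return json.loads(get(agent_card_url(base_url)))
```

For example, fetch_agent_card("http://localhost:9999") should return the card as a dictionary while the helloworld server is running; the exact fields depend on the sample's version.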

In a separate window, run the client from the same helloworld directory:

> uv run test_client.py

The server will send a response including the AgentCard below, describing it as a helloworld agent whose skill is to say “hello world”.

The response will also include the extended agent card and skills as below:

Next Steps

Building a simple MCP Server for Bronto

The next blog in this series will show how to build a simple MCP server on top of Bronto’s REST API and a sample SQL database and demonstrate their use in an example IT scenario.

Building an A2A Agent for Bronto

The final blog in this series will show how to build an A2A agent for Bronto and use it in conjunction with a “Super-Agent” that will also talk to an agent for a SQL database. It will show how Bronto’s data store, designed specifically to rapidly search and store high volumes of log data, and its rich REST API can be a first-class citizen in the agentic world.

Summary

We have discussed the features of A2A and how it complements MCP, and have also shown how to build basic A2A client and server agents, showing the use of Agent Cards in particular. While these agents are trivial, we will use them to build more complex agents in subsequent posts, and this will show the power of A2A in allowing an A2A agent to interact with any other A2A agent, discover its capabilities and call it to perform tasks. There are also a number of other interesting samples in the A2A GitHub repository.

A2A is built on existing web technologies and its defined communication methods should facilitate the collaboration of agents and allow more complex, capable, and scalable AI solutions to be built. It is, however, an evolving protocol and there are areas where future development is needed to realise that goal of collaborating autonomous agents:

- Multi-Agent Orchestration: Agents will need higher-level standardized support to handle complex interactions autonomously, e.g. conflict handling and handling failure across multiple agents, including across organizations.

- Shared Vocabulary: There is no agreed/shared vocabulary to define items that may be described over A2A, e.g. a shared definition of a bill, invoice, policy or receipt or standard agent cards for given purposes.

- Trust: Establishing trust (for agents across organizations) will be key to adoption.

- Security: Authentication is provided by A2A, but there will need to be richer methods to prevent malicious agent interactions and ensure data privacy across organisation/national boundaries.

- Performance and Scalability: There will need to be means to manage latency and resource usage, especially when large numbers of agents are collaborating.

- Enterprise Readiness: Standardized means for usage management/pricing, SLOs/SLIs and SLAs are needed, and even automated negotiation of these items.

A separate open source project, AGNTCY, backed by Cisco, LangChain, Galileo and others, is another possible way of enabling AI agents to work together. For example, it provides the Open Agent Schema Framework (OASF) as a standardized way for agents to describe their capabilities, inputs, and outputs.