The Intelligent Observability Data Platform

A new way to do observability

Three purpose-built layers, stacked to deliver outcomes nothing else can match.

Step 1: Send 100% of your data, full fidelity, no blind spots, always hot.

Up to 90% lower storage cost

Purpose-built columnar storage replaces expensive hot tiers.

10x faster search

Sub-second queries across petabytes — no rehydration.

1 year retention by default

Keep a full year of hot, queryable data, included.

No blind spots

Full coverage of logs, metrics, and traces — nothing dropped.

Petabyte scale

Scales horizontally without re-architecting your stack.

Full fidelity, no sampling

Every byte stored exactly as received.

Bronto loves scale. And fast answers.

Store 10x more data at up to 95% lower cost — and get faster MTTR, less downtime, and instant answers across every byte.

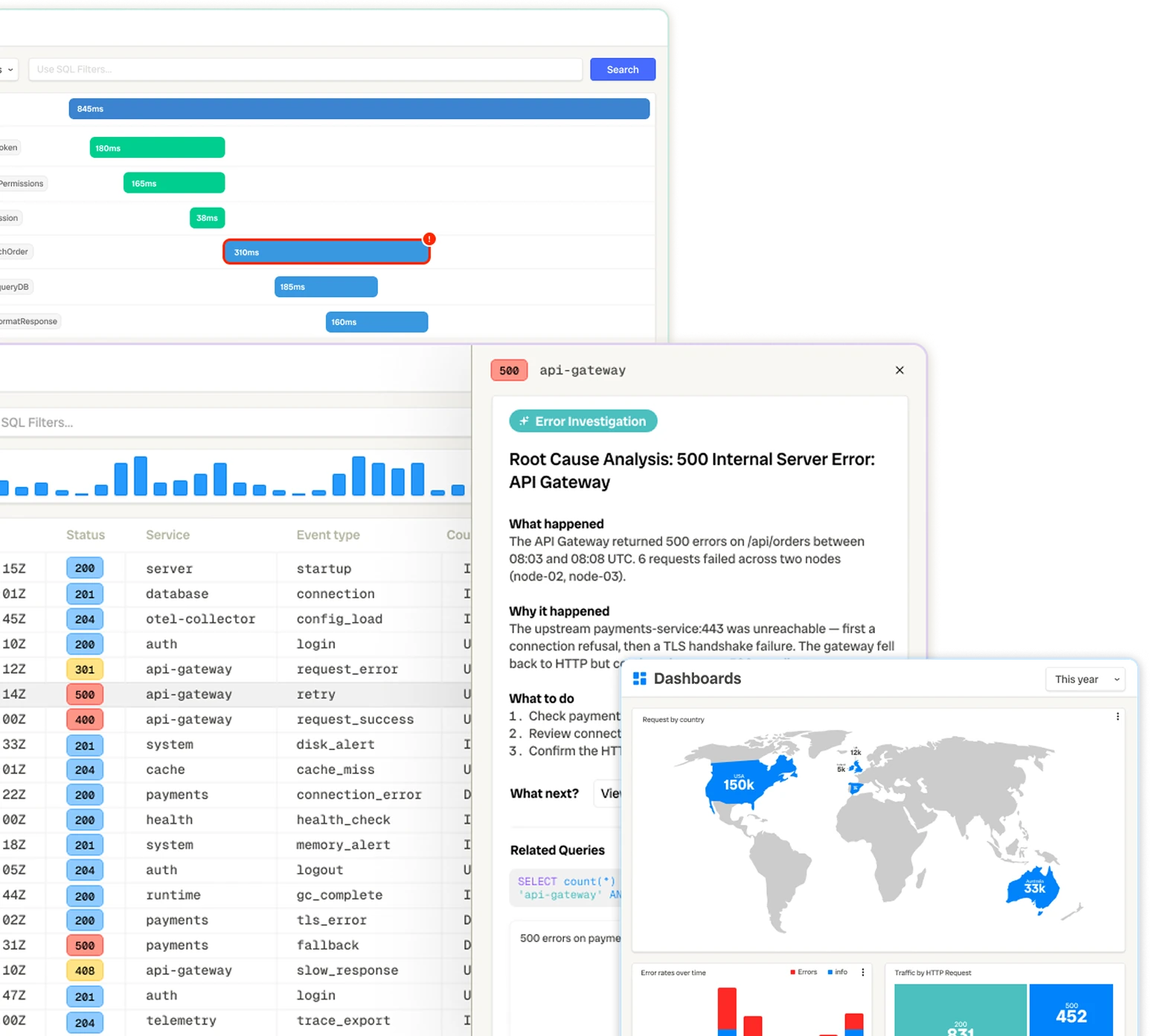

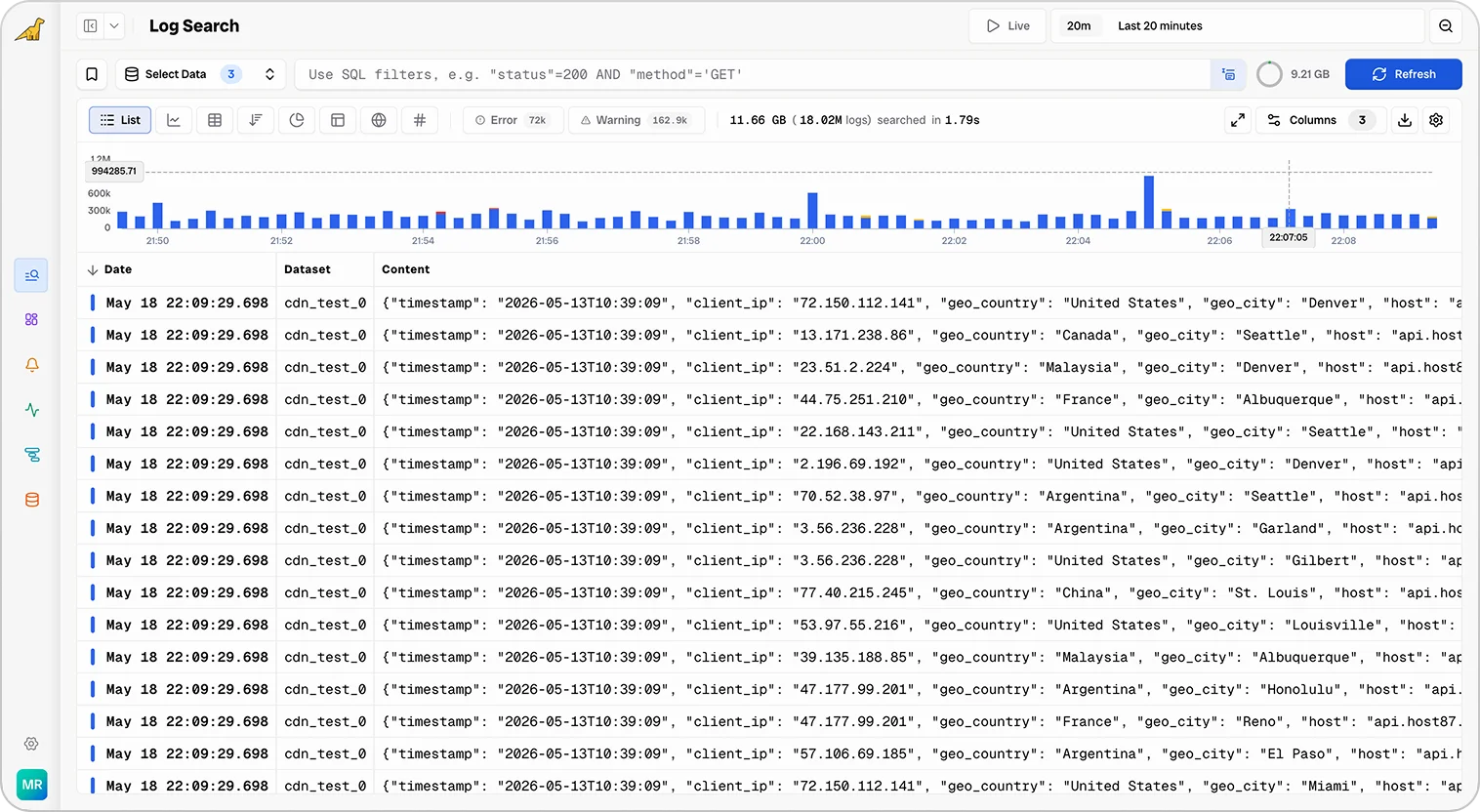

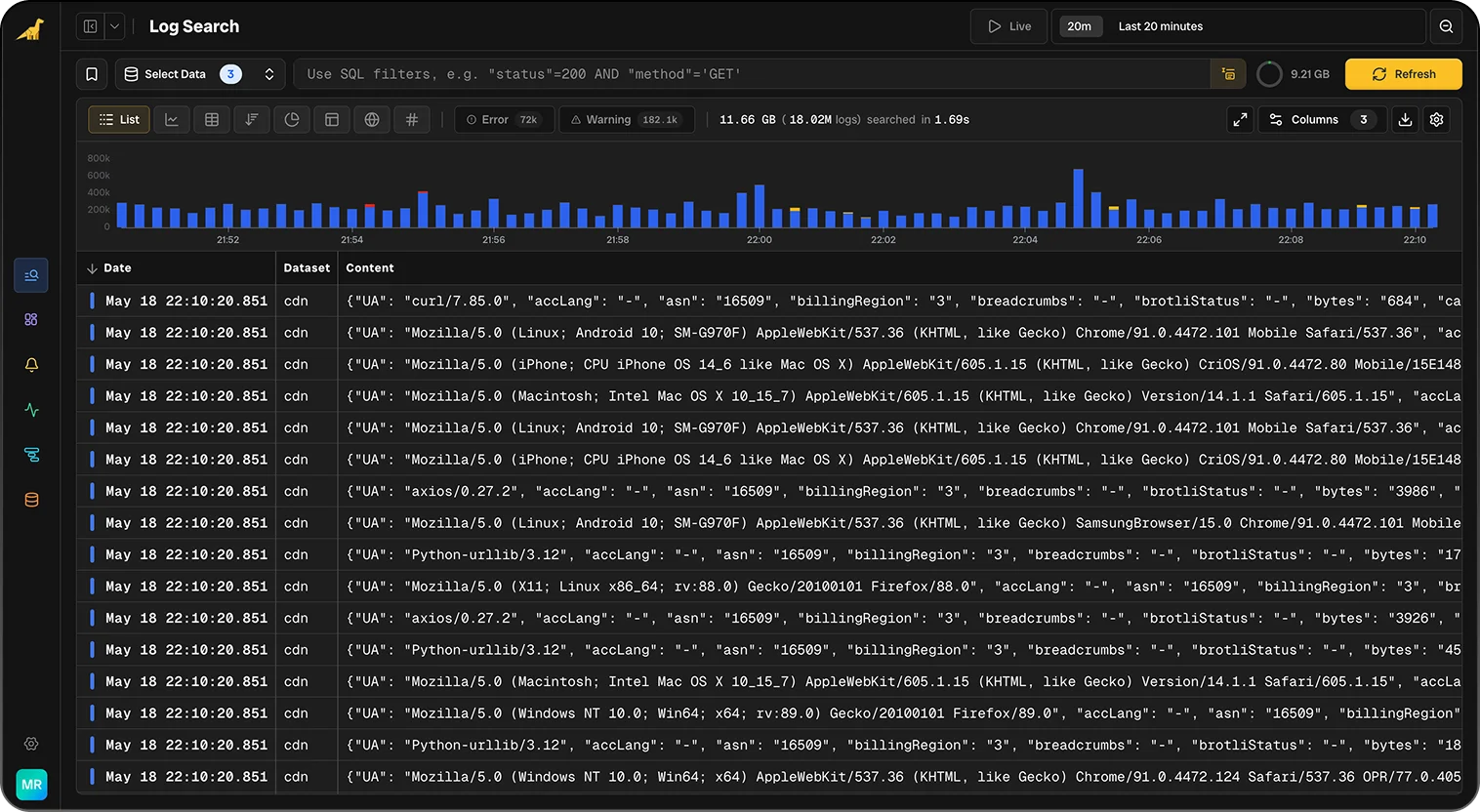

Search Your Logs Instantly

Filter terabytes of logs in under a second with smart SQL autocomplete and keyboard-driven shortcuts.

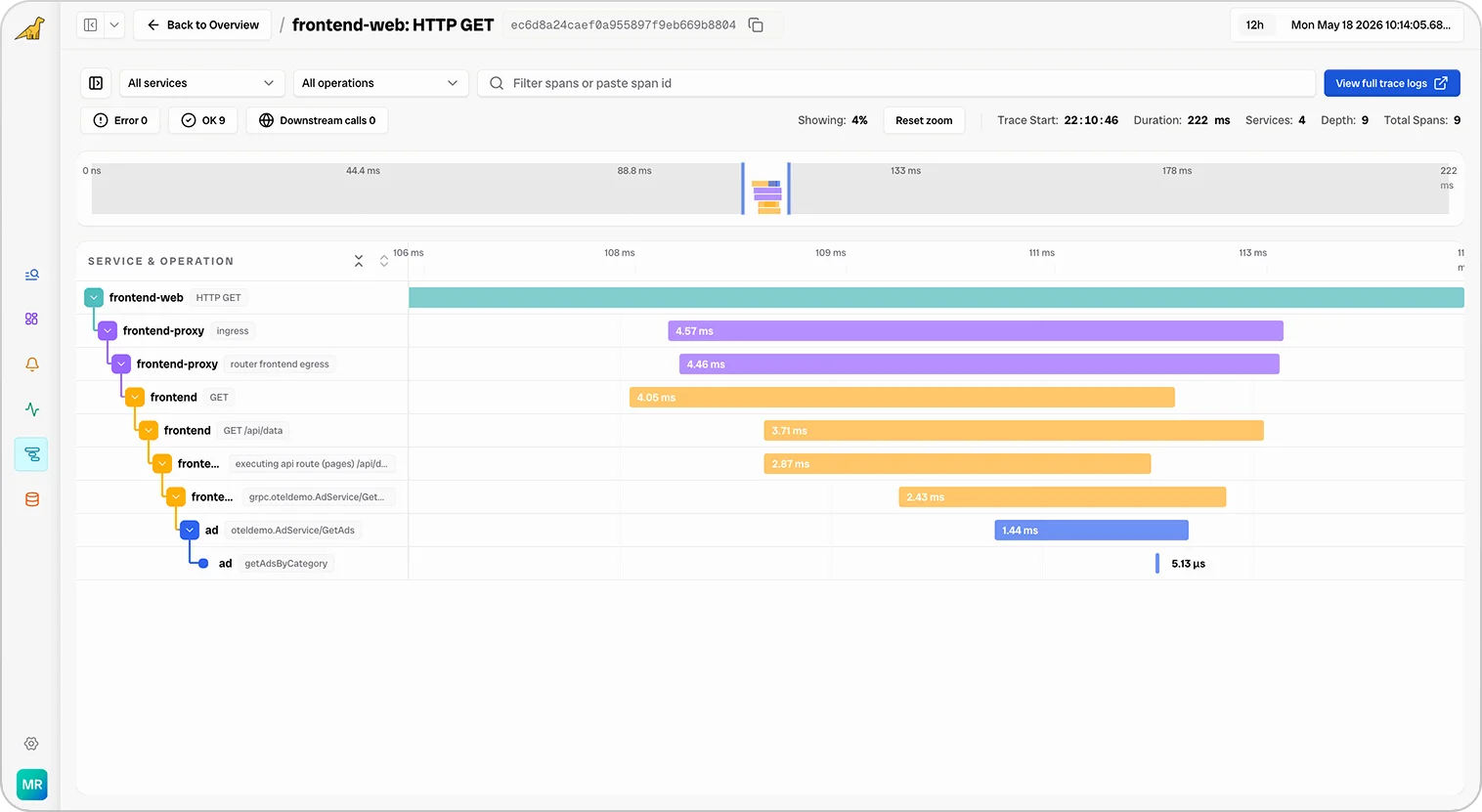

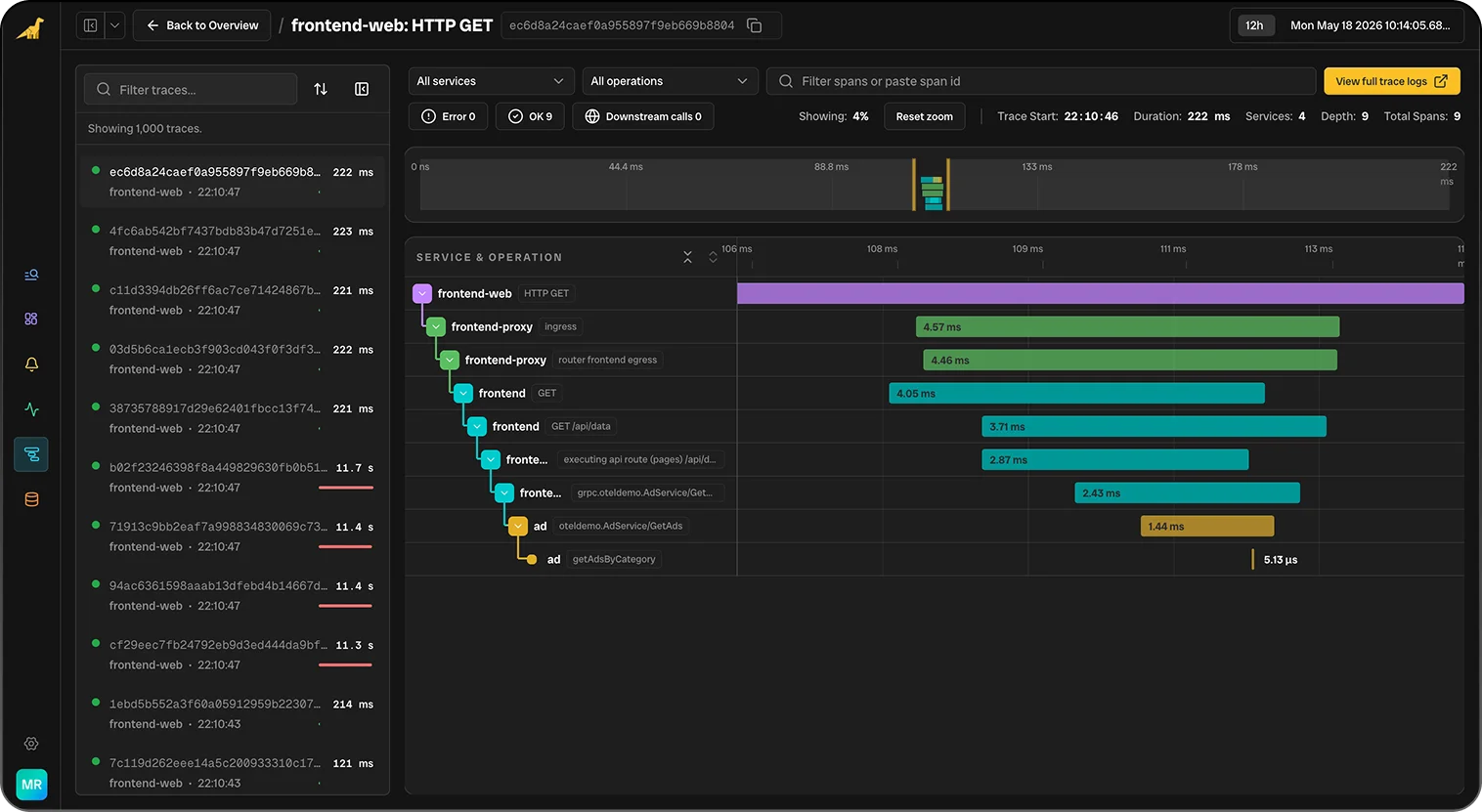

Trace Every Request End-to-End

Follow requests across every service, queue, and database. Pinpoint latency and errors with distributed tracing and waterfall view.

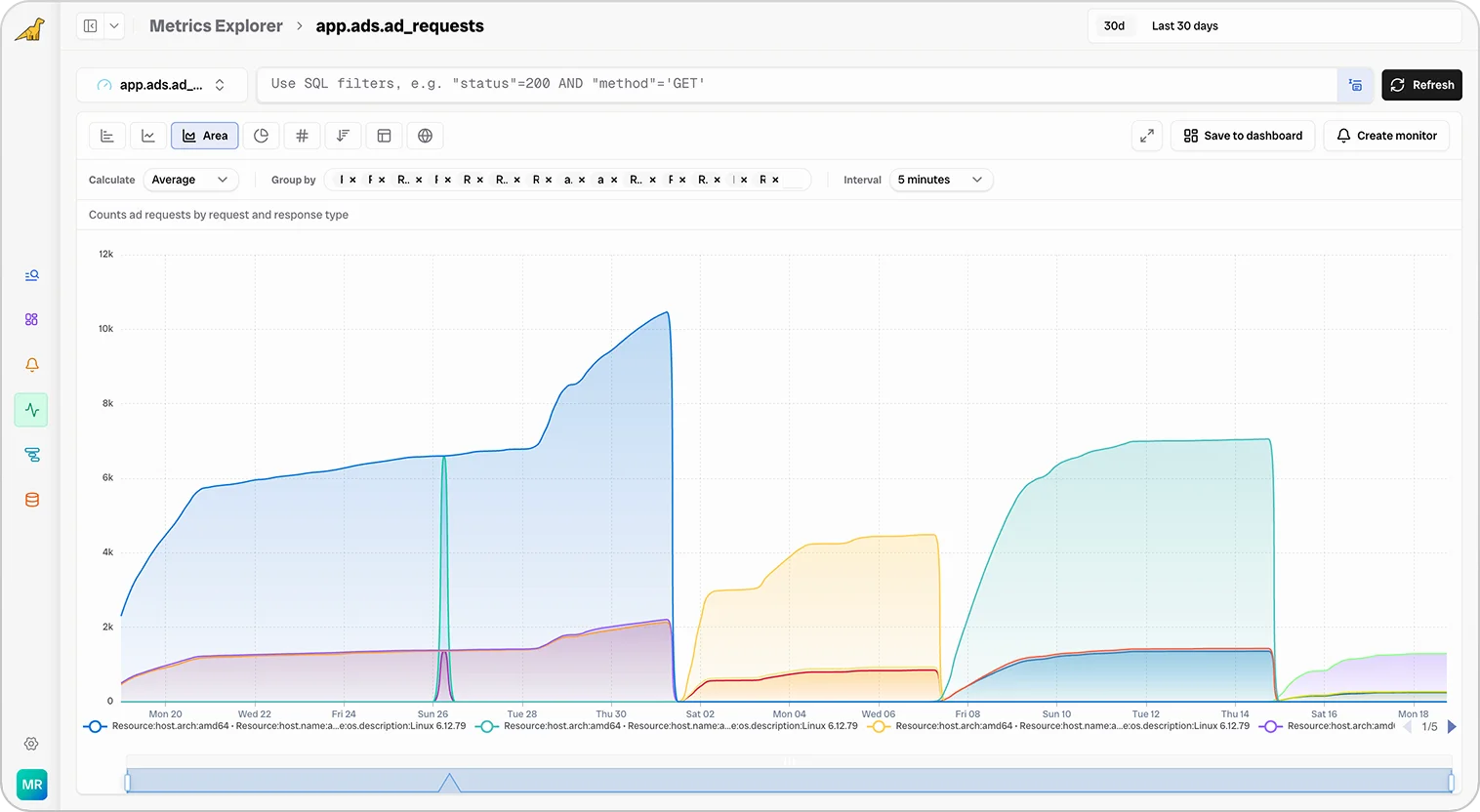

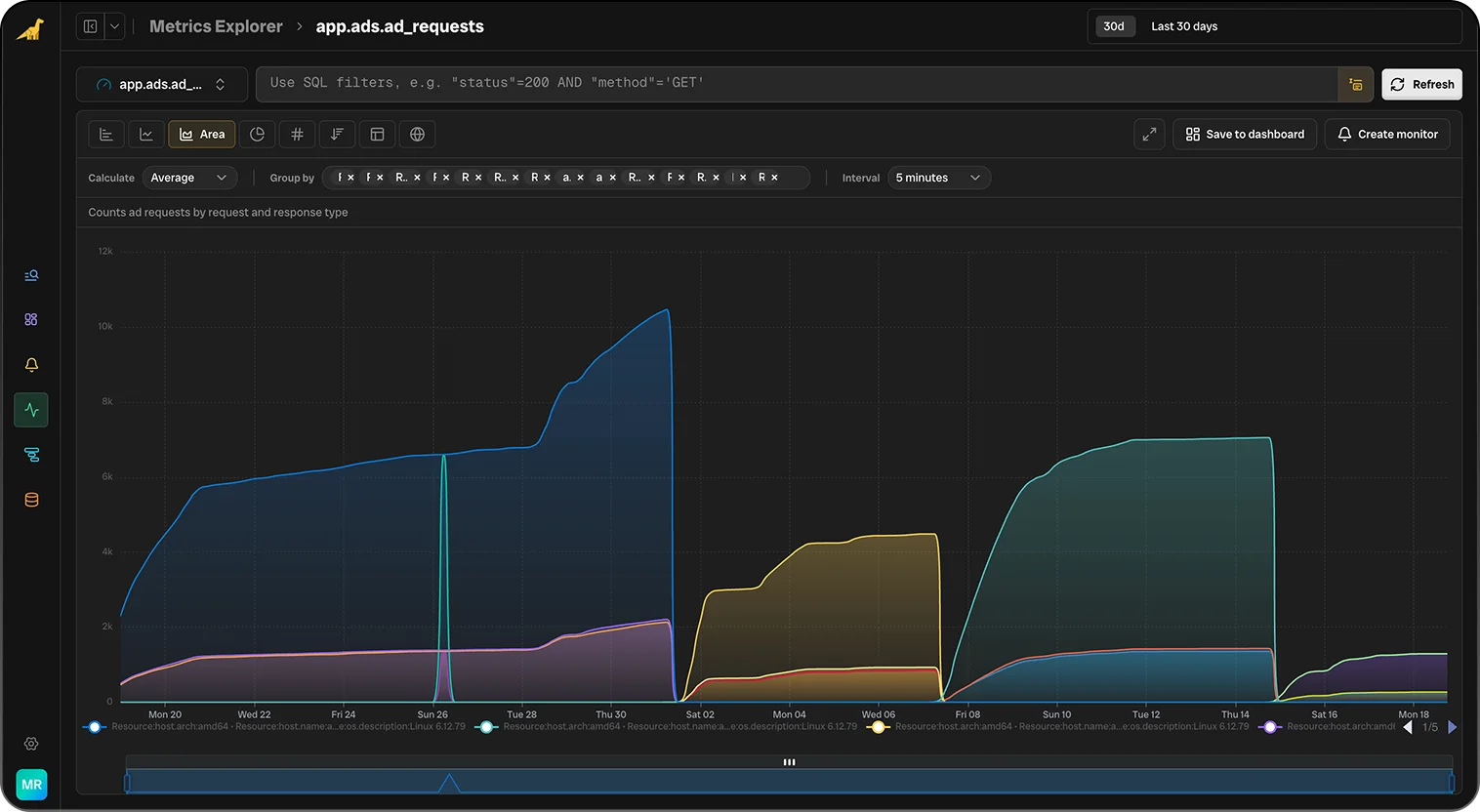

High-Cardinality Metrics, No Limits

Collect, store, and query infrastructure and application metrics with full granularity. 12-month retention out of the box, with sub-second queries even at high cardinality.

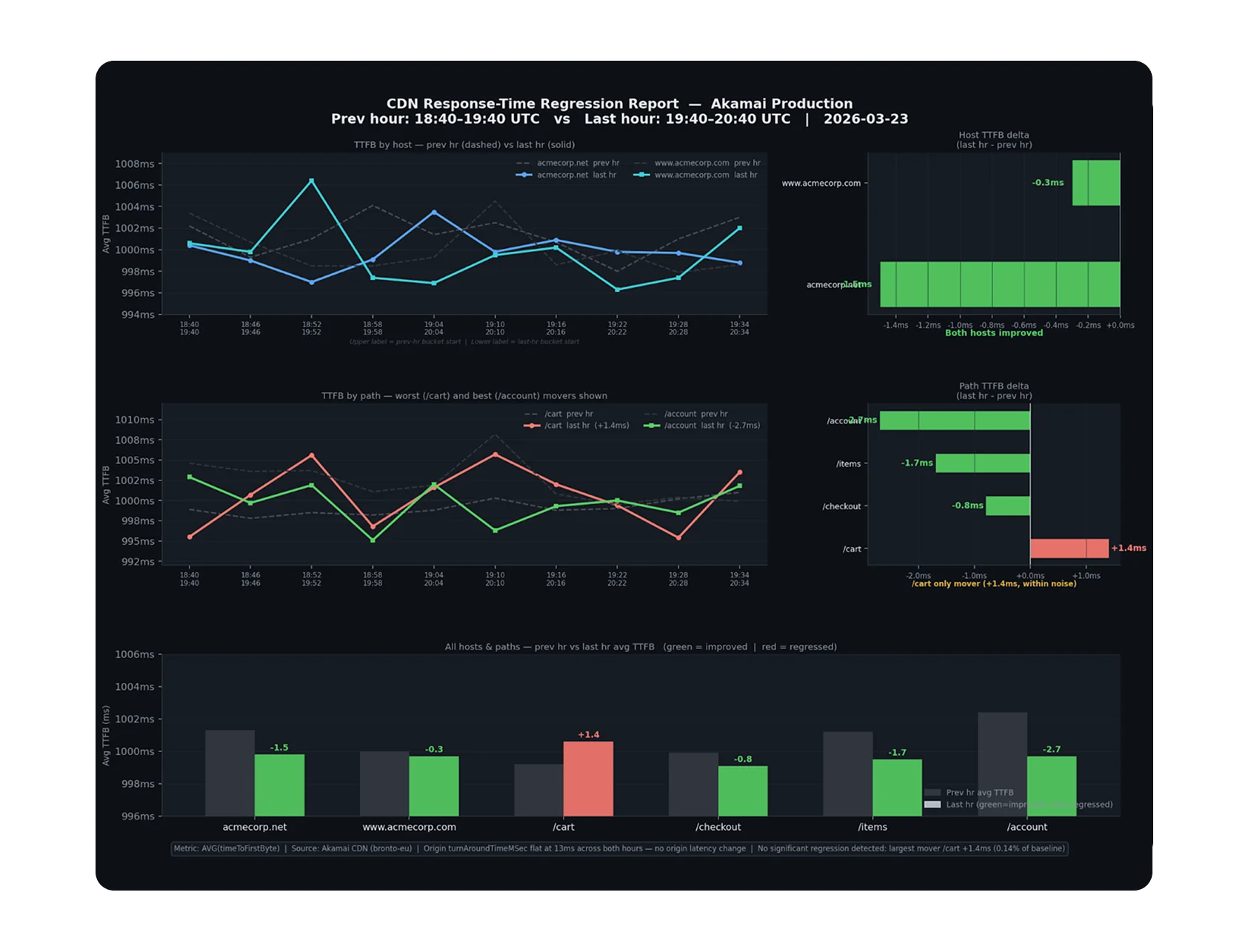

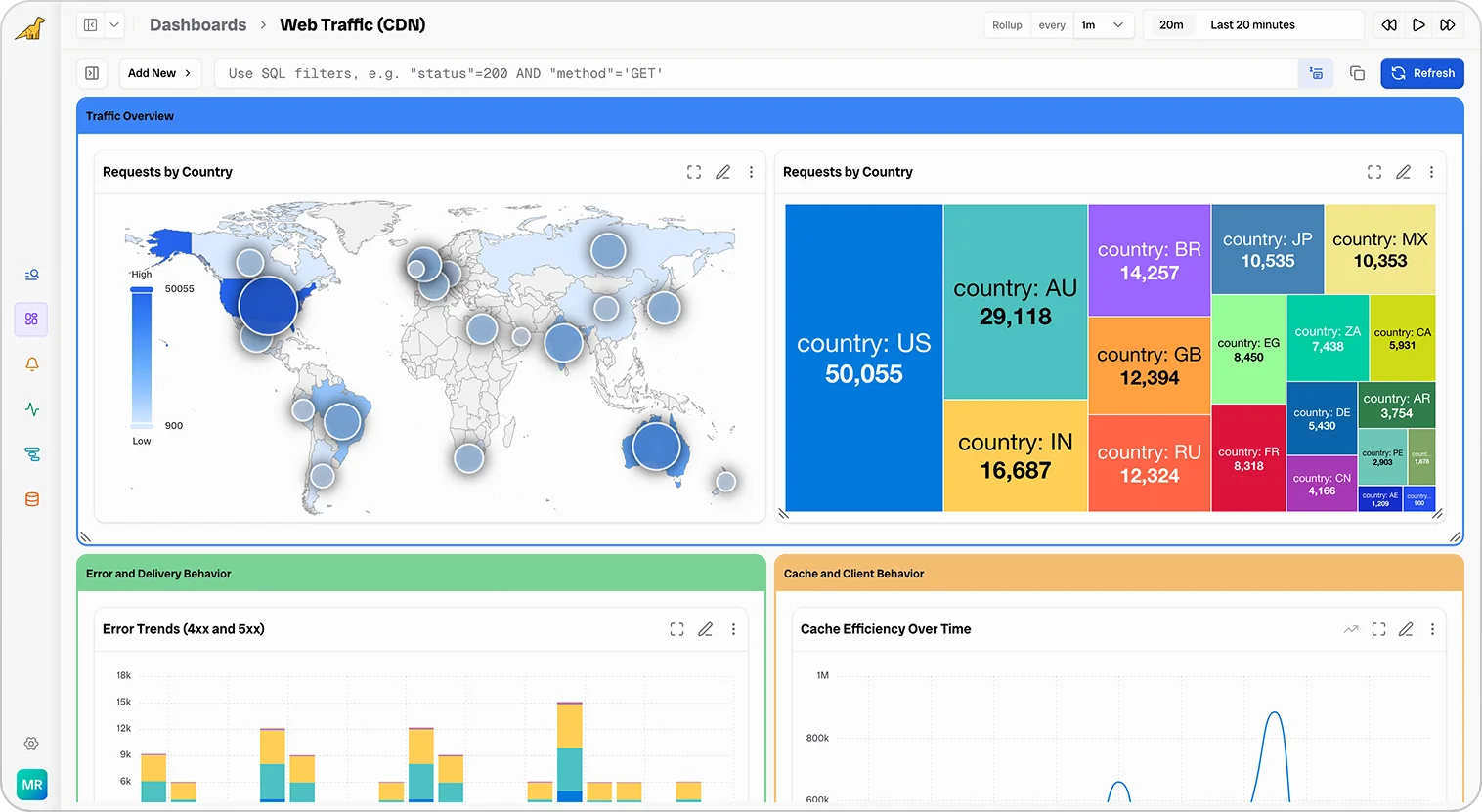

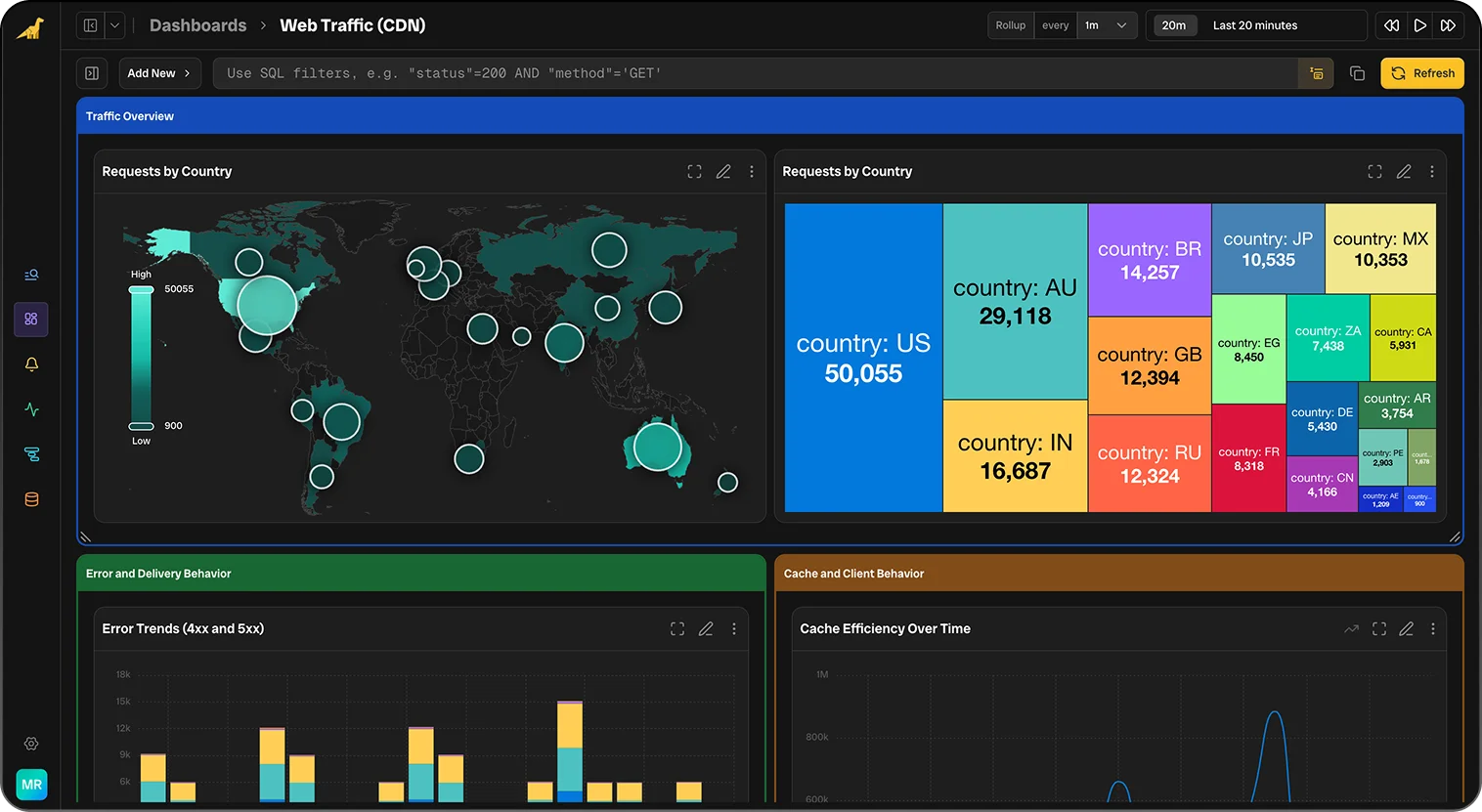

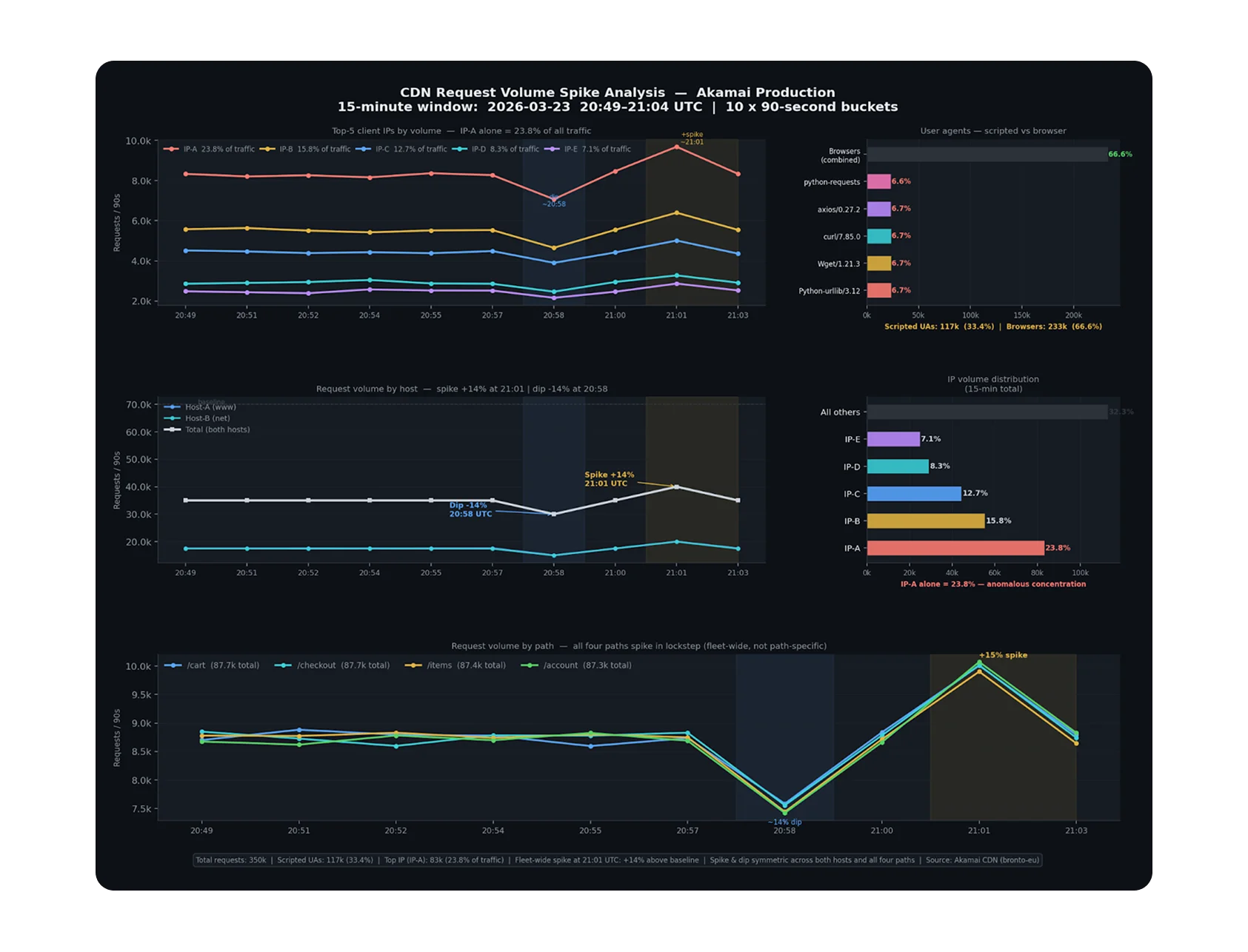

Insanely Quick Dashboards

Drag-and-drop dashboards rendered in seconds, even over petabytes. Start from suggested templates or build your own.

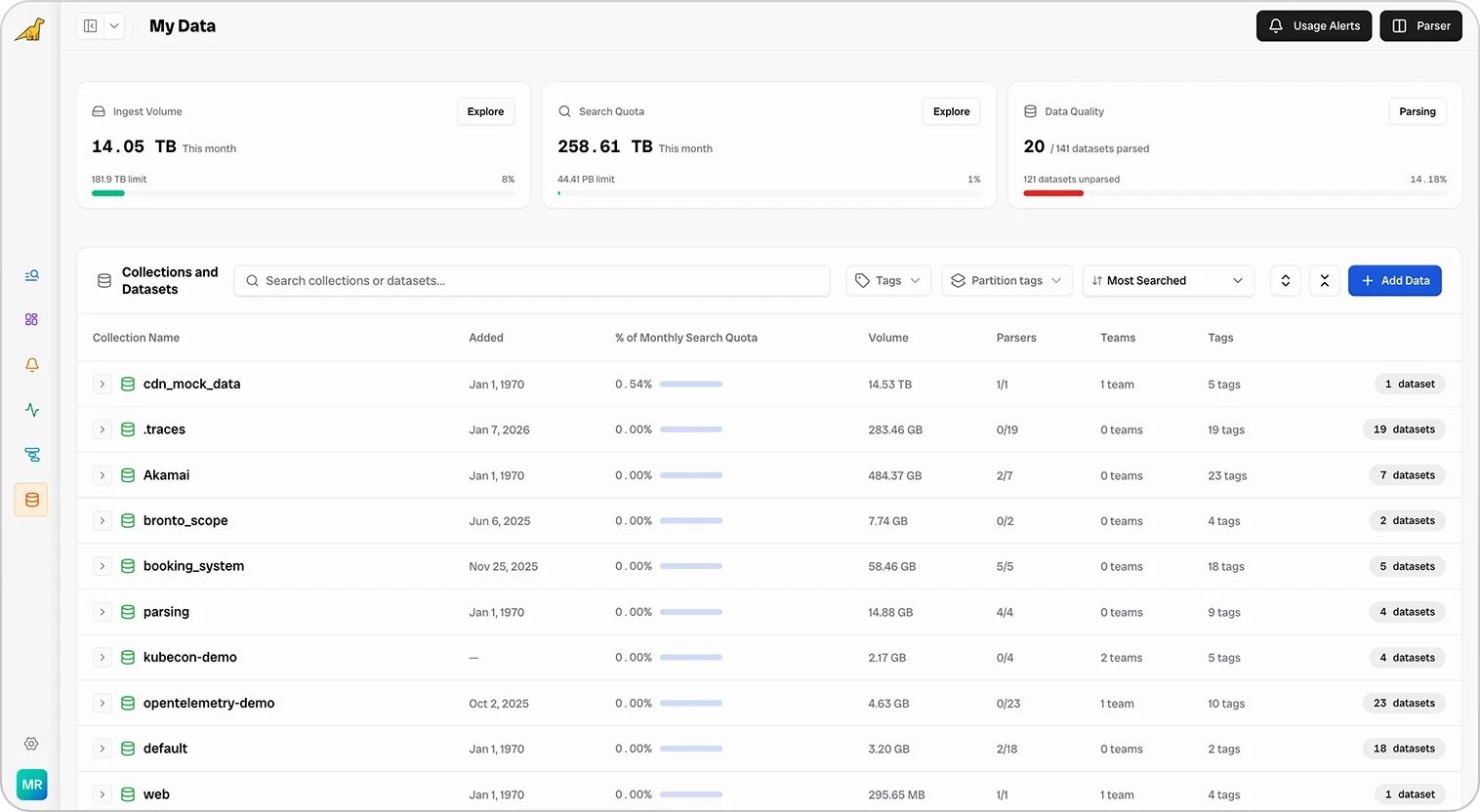

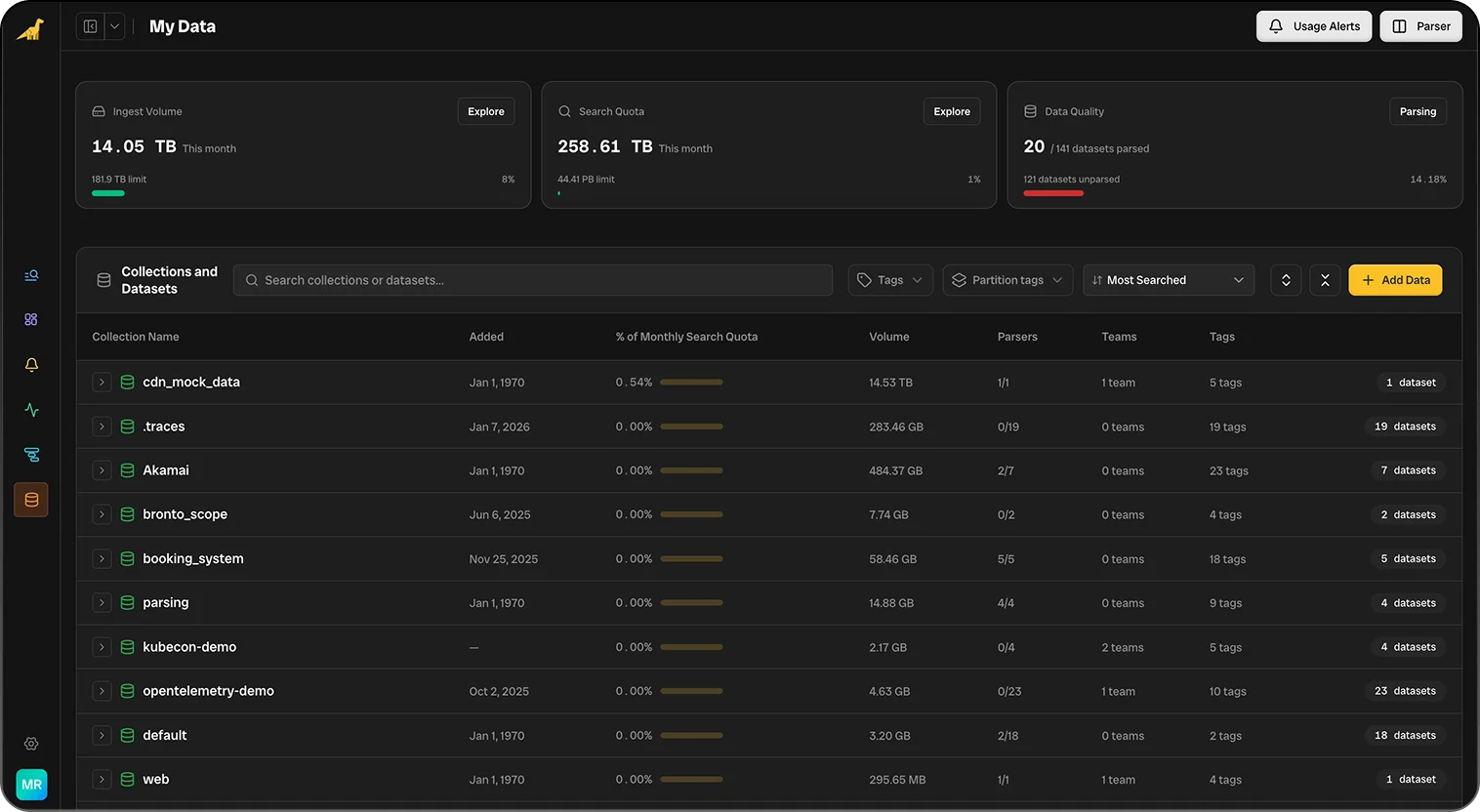

Full Transparency Into Usage & Cost

See exactly how your data is being used and by whom. Break down ingest, storage, and queries per team, service, or user, so you stay in control of cost and value.

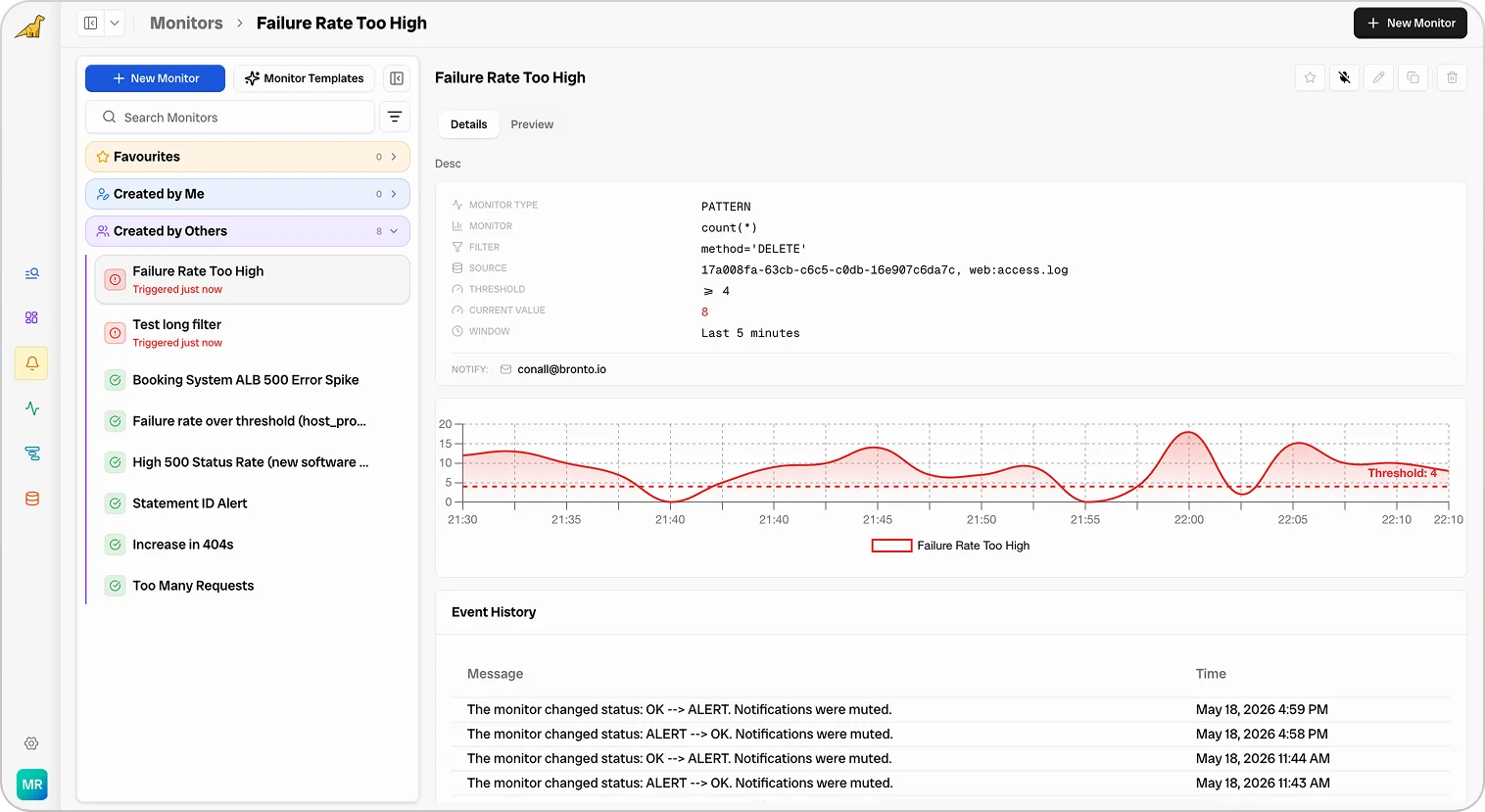

Intelligent Alerting & Reporting

AI-tuned alerts cut noise and surface what matters, with shareable reports that turn every incident and trend into clear, actionable insight.

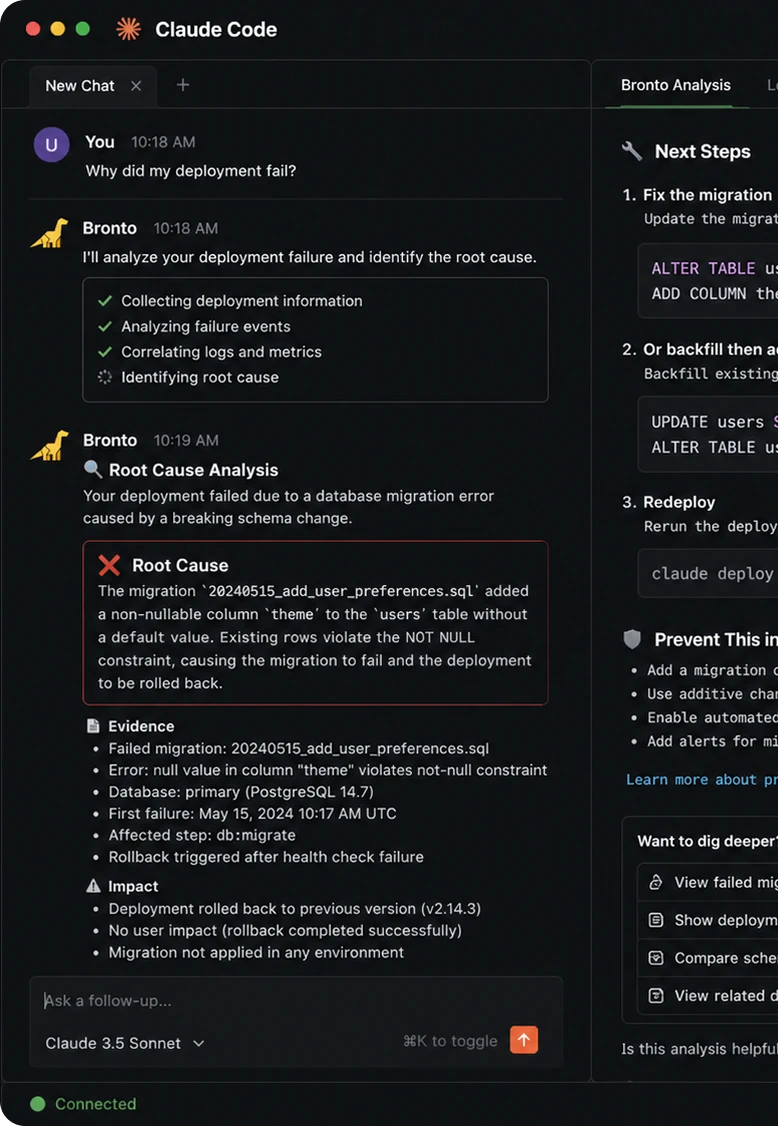

Optimised for your new workflow

Whether you live in your IDE, your terminal, or your own UI — Bronto plugs into the way you already work.

Bronto MCP

Plug Bronto straight into Claude, Cursor, and any MCP-aware agent so your logs, metrics, and traces become a first-class tool call.

REST & Query API

Programmatic access to every query, dashboard, and alert.

CLI & Terminal

Search, tail, and pipe Bronto data right from your shell.

Build Your Own UI

Use our APIs and MCP server with tools like Lovable and V0 to build internal tools, custom dashboards, and bespoke workflows on top of Bronto's data.

AI SREs

Let autonomous agents triage incidents, investigate anomalies, and surface root cause in seconds.

Your IDE & Coding Agents

Query your telemetry without leaving your IDE or coding agent of choice.

Get started in minutes.

Send data from anywhere with OTEL-native ingestion, get every field automatically structured, parsed, and searchable, and start exploring with pre-built dashboards, alerts, and searches from day one.

OTEL Native & Agent Agnostic

Ingest from OpenTelemetry, Fluentd, Fluent Bit, Vector, Datadog agents, and more. No lock-in.
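For a sense of what OTEL-native ingestion looks like on the wire, here is a minimal sketch that builds a single log record in the OTLP/HTTP JSON encoding using only the Python standard library. The ingestion URL is a placeholder rather than a documented Bronto endpoint, and the service name and message are made up for illustration. In practice an OpenTelemetry SDK or Collector would build and send this payload for you.

```python
import json
import time

# Placeholder only: substitute the OTLP/HTTP logs endpoint from your own account settings.
INGEST_URL = "https://ingest.example.invalid/v1/logs"


def build_otlp_log_payload(service_name, severity, message, ts_ns=None):
    """Return a dict in the OTLP/HTTP JSON shape for a single log record."""
    if ts_ns is None:
        ts_ns = time.time_ns()
    return {
        "resourceLogs": [
            {
                "resource": {
                    "attributes": [
                        {"key": "service.name", "value": {"stringValue": service_name}}
                    ]
                },
                "scopeLogs": [
                    {
                        "logRecords": [
                            {
                                # OTLP JSON encodes 64-bit integers as strings.
                                "timeUnixNano": str(ts_ns),
                                "severityText": severity,
                                "body": {"stringValue": message},
                            }
                        ]
                    }
                ],
            }
        ]
    }


payload = build_otlp_log_payload(
    "payment-svc", "INFO", "Request processed successfully", ts_ns=1
)
# This string is what would be POSTed with Content-Type: application/json.
body = json.dumps(payload)
```

Nothing is sent here; the sketch only shows the shape of the data that any OTLP-compatible agent would produce.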

All Your Data Structured & Parsed

Logs are parsed at ingestion using known formats or AI, so every field is structured and instantly searchable.
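As an illustration of what parsing a known format at ingestion means, the sketch below turns a raw text line into named, typed fields with a regular expression. The line layout and field names are assumptions chosen for the example; this is not Bronto's parser.

```python
import re

# Hypothetical layout: "<timestamp> <level> <service> <method> <path> <status> <duration>"
LINE_PATTERN = re.compile(
    r"(?P<timestamp>\S+) (?P<level>[A-Z]+) (?P<service>\S+) "
    r"(?P<method>[A-Z]+) (?P<path>\S+) (?P<status>\d{3}) (?P<duration>\d+)"
)


def parse_line(line):
    """Return a dict of typed fields, or None when the line does not match."""
    match = LINE_PATTERN.fullmatch(line.strip())
    if match is None:
        return None
    fields = match.groupdict()
    # Convert numeric fields so they can be filtered and aggregated as numbers.
    fields["status"] = int(fields["status"])
    fields["duration"] = int(fields["duration"])
    return fields


raw = "2026-05-06T15:50:29.023Z INFO payment-svc PUT /api/v1/orders 201 89"
parsed = parse_line(raw)
```

Once every field has a name and a type, a search such as "status at or above 500 for one service" becomes a simple comparison instead of a text scan.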

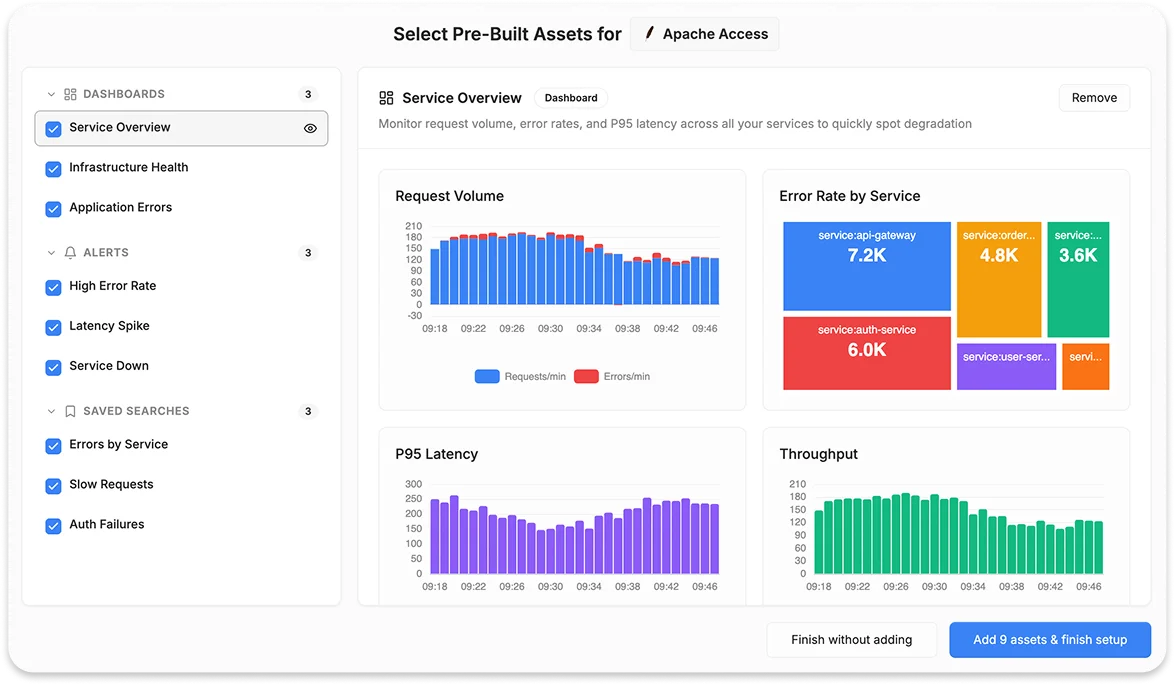

Pre-built Dashboards, Alerts & Searches

Start instantly with curated dashboards, alerts, and saved searches for common services and frameworks.

Chat to our team or get started with a free demo.

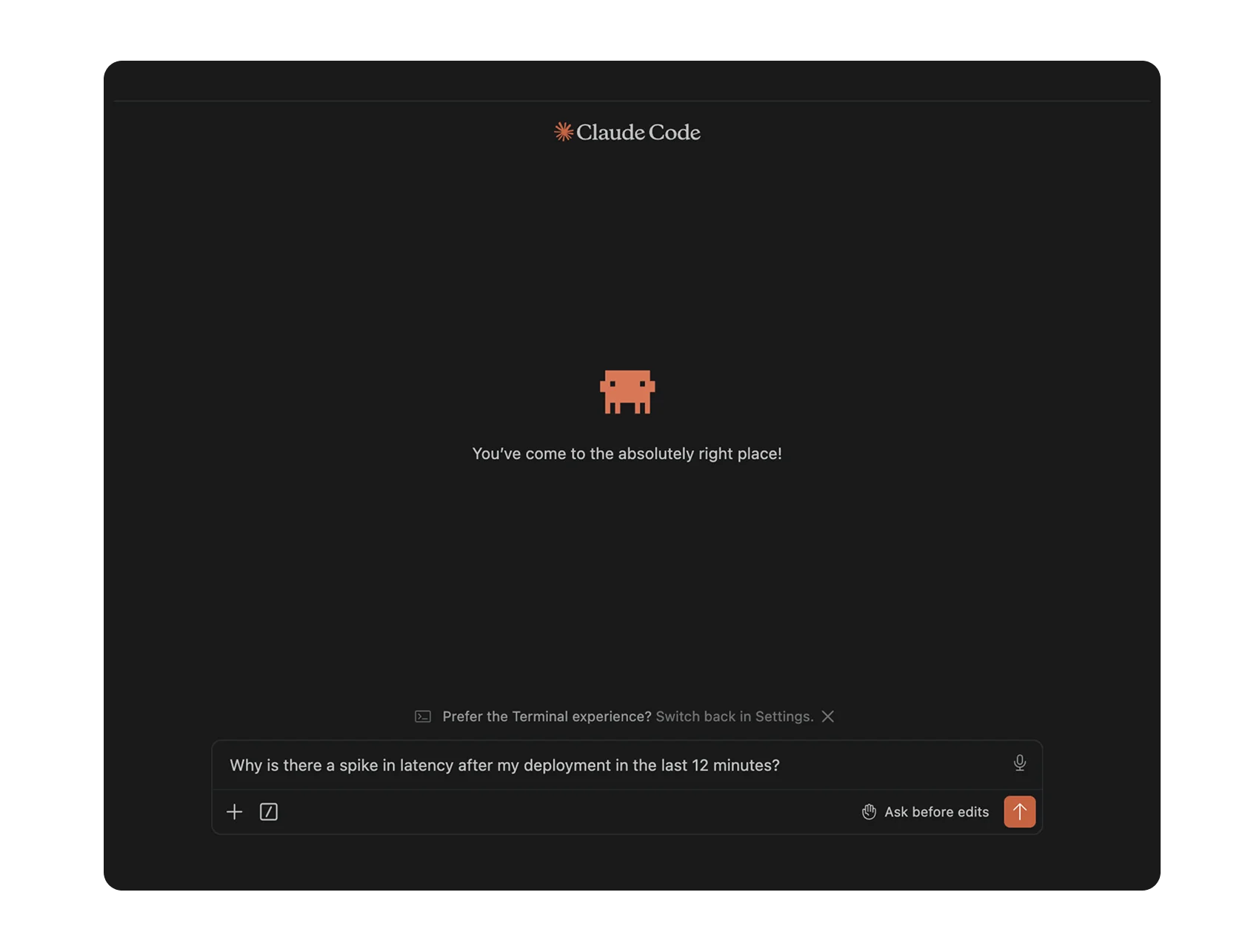

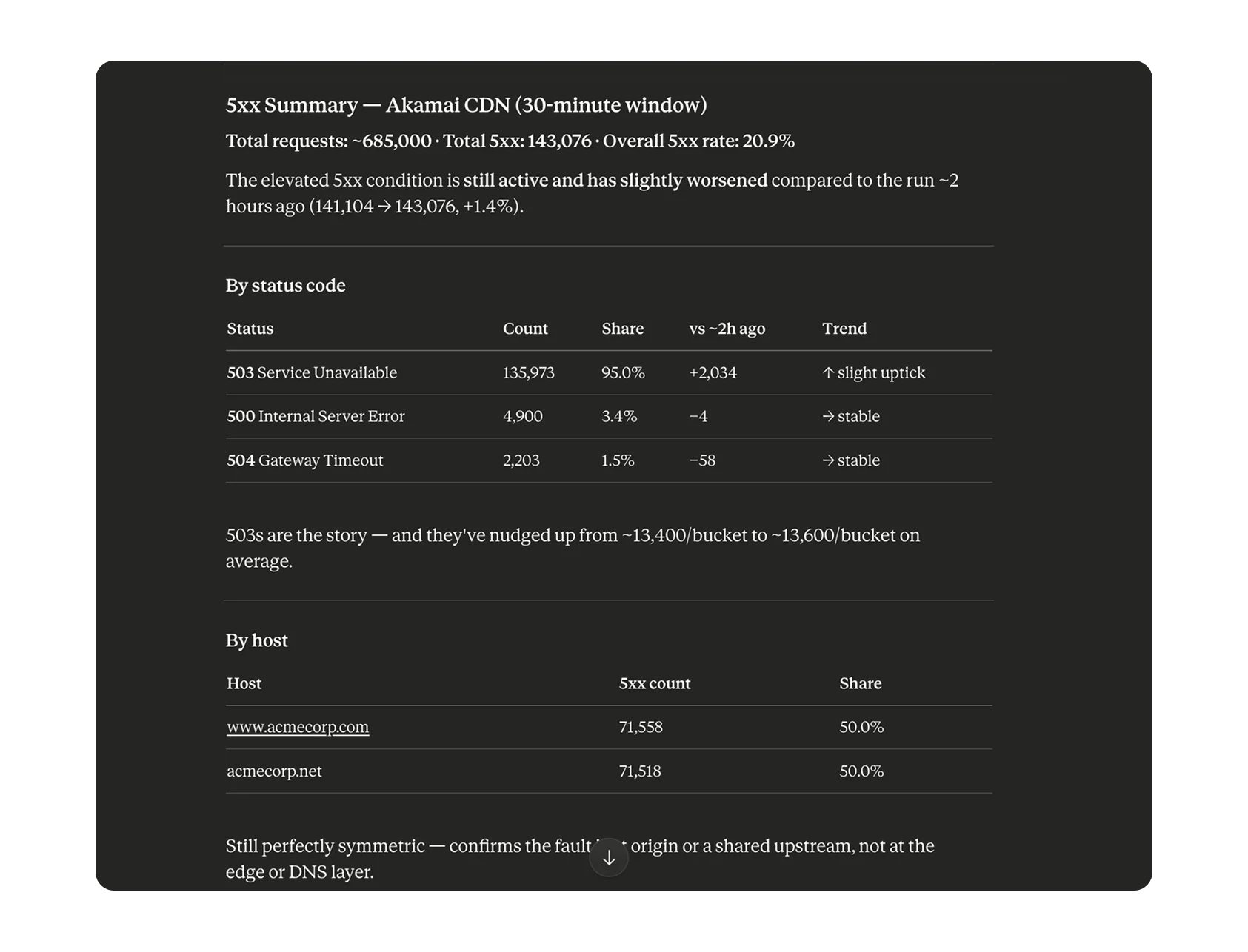

Bronto AI does the hard work, so you can keep building.

AI is embedded throughout the entire platform — removing toil, surfacing root causes, and helping your team find answers in seconds, not hours.

Root Cause Analysis Report

Generated: May 06, 2026 17:26:00 · Window: Apr 29 → May 06

1. Analysis

Primary Issue

Sustained, high-volume backend receive errors (BG-ERROR-RECV) returning HTTP 503 across the API fleet.

Scope Description

- Total errors: ~7.1M across the 7-day window, 20 buckets

- Volume is consistently massive — ~343K to ~371K per bucket, indicating a chronic, systemic failure

- Signature (BG-ERROR-RECV, HTTP 503) points to Fastly edge failing to receive a valid response from origin (api.host78.us.example.com)

- Protocol anomaly: request_protocol: "UDP" (invalid for an HTTP API endpoint)

- Edge: fastly_is_edge: true (failure at edge tier before app layer)

- Response time: 3643ms (requests stalling at backend before drop)

- Trend: slight decline mid-window (Apr 30–May 02 ~360K) then rebounding sharply (May 05–06 ~371K) — worsening
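The scope figures can be sanity-checked with simple arithmetic: 20 buckets at roughly 343K to 371K errors each should bracket the reported total of about 7.1M. A quick sketch, using only the rounded numbers from the report:

```python
buckets = 20
low_per_bucket = 343_000
high_per_bucket = 371_000
reported_total = 7_100_000

low_total = buckets * low_per_bucket        # lower bound on the total
high_total = buckets * high_per_bucket      # upper bound on the total
implied_average = reported_total / buckets  # average errors per bucket

# The reported total should fall between the two bounds.
consistent = low_total <= reported_total <= high_total
```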

2. Hypothesis & Next Steps

Probable Cause

Backend origin servers behind Fastly are failing to handle TCP connections reliably — likely origin pool exhaustion, a crashing upstream, or a network-level issue preventing edge nodes from completing the handshake. The UDP protocol field suggests either malformed requests forwarded to origin (misconfigured VCL) or a logging pipeline bug masking the true protocol.

Immediate Next Steps

- Investigate the UDP anomaly at Fastly. Review VCL config for api.host78.us.example.com; pull real-time logs filtered to cache-iad-kcgs_* to check if errors concentrate on specific PoPs (especially IAD).

- Assess origin server health. Check CPU, memory, file descriptors, and connection queue depth. Review app logs from May 05 17:00 → May 06 17:26 for crash loops, OOM events, or dependency failures.

Report confidence: High — volume consistency and error signature indicate a reproducible failure mode requiring parallel edge and origin investigation.

Related queries

- service:api status:503 host:"api.host78.us.example.com"

- fastly_is_edge:true error_code:BG-ERROR-RECV

- pop:cache-iad-kcgs_* response_time:>3000
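The related queries above read as space-separated field:value terms. As a rough sketch of how a script might split such a string into fields, assuming that simple grammar (this is not a specification of Bronto's query language):

```python
import shlex


def parse_query(query):
    """Split a space-separated field:value query into a dict of field -> value."""
    terms = {}
    # shlex.split keeps quoted values together and strips the quotes.
    for token in shlex.split(query):
        key, _, value = token.partition(":")
        terms[key] = value
    return terms


parsed = parse_query('service:api status:503 host:"api.host78.us.example.com"')
```

Values such as `>3000` or `cache-iad-kcgs_*` are kept as plain strings here; interpreting comparisons and wildcards is left to the query engine.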

[Bronto] Investigation Report · New org created in US

A new organization was created in the US which triggered the "New org created in US" monitor. Trial setup completed successfully and no anomalies or service issues were detected.

The monitoring workflow detected the creation of a new organization in the US region and automatically generated an investigation report. The system successfully created the organization, customer account, user account, and trial limits without interruption.

This investigation was triggered intentionally by the "New org created in US" monitor. The monitor notifies the team whenever a new trial organization is provisioned in production. The behavior matches expected onboarding activity.

No immediate action is required. Recommended follow-up: validate welcome email delivery, monitor early ingestion activity, and confirm billing and trial entitlements remain correctly applied over the next 24 hours.

| # | timestamp | level | service | method | path | status | duration | trace_id | user_id | message |
|---|---|---|---|---|---|---|---|---|---|---|
| 1 | 2026-05-06T15:50:29.023Z | INFO | payment-svc | PUT | /api/v1/orders | 201 | 89 | ae082847-7c3a-41 | usr_7cnszl | Request processed successfully |
| 2 | 2026-05-06T15:50:32.023Z | INFO | api-gateway | PUT | /api/v1/payments | 400 | 791 | 773c4702-1d37-48 | usr_6xjqtf | Authentication token validated |
| 3 | 2026-05-06T15:50:35.023Z | ERROR | payment-svc | GET | /api/v1/orders | 200 | 652 | cb18b186-cc39-49 | usr_st05bj | Request processed successfully |
| 4 | 2026-05-06T15:50:38.023Z | DEBUG | payment-svc | POST | /api/v1/auth/login | 400 | 851 | c51e1053-4d29-41 | usr_zeg1z5 | Rate limit threshold approaching |
| 5 | 2026-05-06T15:50:41.023Z | WARN | auth-service | DELETE | /api/v1/health | 500 | 851 | 6cbfce21-94aa-49 | usr_rix8ib | Rate limit threshold approaching |
| 6 | 2026-05-06T15:50:44.023Z | DEBUG | order-service | DELETE | /api/v1/auth/login | 500 | 329 | 991c6b2e-290e-4e | usr_tf7nqi | Request processed successfully |
| 7 | 2026-05-06T15:50:47.023Z | ERROR | auth-service | DELETE | /api/v1/payments | 400 | 207 | 21bc8db6-faca-4a | usr_4yhgbp | Rate limit threshold approaching |
| 8 | 2026-05-06T15:50:50.023Z | INFO | api-gateway | POST | /api/v1/orders | 201 | 142 | f47ac10b-58cc-4a | usr_k3m9wp | Authentication token validated |
| 9 | 2026-05-06T15:50:53.023Z | WARN | payment-svc | GET | /api/v1/payments | 200 | 998 | b7d2c891-3f12-49 | usr_8nv2qs | Request processed successfully |
| 10 | 2026-05-06T15:50:56.023Z | ERROR | auth-service | PUT | /api/v1/health | 500 | 412 | a92e0c14-9b88-4d | usr_pl4xtb | Rate limit threshold approaching |
| 11 | 2026-05-06T15:50:59.023Z | INFO | order-service | POST | /api/v1/orders | 201 | 76 | 5e1f8a73-2c44-41 | usr_q9c1zr | Request processed successfully |
| 12 | 2026-05-06T15:51:02.023Z | DEBUG | payment-svc | GET | /api/v1/payments | 200 | 583 | c2d9b6e0-7f33-48 | usr_h7d3yf | Authentication token validated |
| 13 | 2026-05-06T15:51:05.023Z | INFO | api-gateway | DELETE | /api/v1/auth/login | 400 | 921 | e8b4f3a1-4d28-42 | usr_b2x8mn | Rate limit threshold approaching |
| 14 | 2026-05-06T15:51:08.023Z | WARN | auth-service | PUT | /api/v1/health | 500 | 305 | 3a7c9d2e-1b55-4f | usr_v4w6ks | Request processed successfully |
| 15 | 2026-05-06T15:51:11.023Z | ERROR | payment-svc | POST | /api/v1/orders | 201 | 188 | 8f2e1a6c-9c77-43 | usr_j1n5pq | Authentication token validated |
| 16 | 2026-05-06T15:51:14.023Z | INFO | order-service | GET | /api/v1/payments | 200 | 754 | d4b8e7f0-3a99-45 | usr_t8r2lc | Request processed successfully |
| 17 | 2026-05-06T15:51:17.023Z | DEBUG | api-gateway | PUT | /api/v1/auth/login | 400 | 432 | 1c5b9e8d-6e22-4a | usr_g6f9hd | Rate limit threshold approaching |
| 18 | 2026-05-06T15:51:20.023Z | INFO | payment-svc | DELETE | /api/v1/health | 500 | 611 | 9b3d6f2a-8c11-49 | usr_m3e7vb | Request processed successfully |
| 19 | 2026-05-06T15:51:23.023Z | WARN | auth-service | POST | /api/v1/orders | 201 | 95 | 7e2a4b1c-5d66-48 | usr_y5u1xa | Authentication token validated |
| 20 | 2026-05-06T15:51:26.023Z | ERROR | order-service | GET | /api/v1/payments | 200 | 488 | 2f8c1d9e-4b33-47 | usr_o2k4wn | Request processed successfully |
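Structured rows like these can be filtered and aggregated directly. The sketch below takes the first six sample rows, reduced to four columns, and picks out the server errors. It is plain Python over an in-memory list, meant only to show the kind of question structured fields make easy, not how Bronto executes queries.

```python
from statistics import mean

# First six sample rows, reduced to the columns used here.
rows = [
    {"service": "payment-svc", "level": "INFO", "status": 201, "duration": 89},
    {"service": "api-gateway", "level": "INFO", "status": 400, "duration": 791},
    {"service": "payment-svc", "level": "ERROR", "status": 200, "duration": 652},
    {"service": "payment-svc", "level": "DEBUG", "status": 400, "duration": 851},
    {"service": "auth-service", "level": "WARN", "status": 500, "duration": 851},
    {"service": "order-service", "level": "DEBUG", "status": 500, "duration": 329},
]

# Keep only responses with a 5xx status code.
server_errors = [r for r in rows if r["status"] >= 500]

# Which services produced them, and how long did those requests take on average?
services_with_5xx = sorted({r["service"] for r in server_errors})
mean_5xx_duration = mean(r["duration"] for r in server_errors)
```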

Loved by teams around the world

“Bronto fundamentally changed how we think about logging. We went from treating logs as a necessary evil — expensive, unreliable, and limited — to making them a key asset. The combination of unlimited retention, lightning-fast search, and AI-powered insights means we catch issues much earlier, often before customers notice them. But the real transformation is cultural: every team now has access to the data they need, when they need it.”

Paul Griffin

Head of Platform Engineering

“Bronto's long-term always-hot days mean we can access data with sub-second search, whether it's from last week or last year. This is huge for our security and AI strategy as we continue to revolutionize how we work at Nitro. For AI-powered analysis of our logs, data availability is key — it's just not possible with only a few days of retention. Bronto has become a key part of our toolkit when we think of log data and how it will play an important role for engineering, security and product teams going forward.”

John Fitzpatrick

CTO

“Tasks that used to take 15 minutes are now almost instant. I find myself using Bronto much more than the previous tool simply because it's faster and more responsive. I can quickly ask questions and get immediate answers, which makes it easier to explore insights that were previously hard to uncover. With a year's worth of data retention instead of just 90 days, I can now identify annual trends and patterns I couldn't see before. This extended retention opens the door for much deeper analytics.”

Brian Elliott

Senior Engineering Manager, SaaS Services

“It's a night and day difference to our previous logging provider. Bronto typically returns results in seconds, while our old vendor took over 30 minutes and frequently failed to render visualizations. Bronto has come a long way with everything from usage to UI. Our users are getting what they're looking for.”

Jaymin Patel

Team Lead

“The TCO reduction is significant — we're saving hundreds of thousands annually. But the real value is in new and significantly enhanced capabilities. A significant reduction in time to root cause, investigating issues from months ago, having logs actually available during incidents — these aren't just improvements, they're game-changers. Our SysOps team now get to work on platform innovation instead of keeping Graylog alive.”

Aodh O'Mahony

Engineering Manager
